Welcome to the 7th edition of Gradient Ascent. I’m Albert Azout, a prior entrepreneur and current Partner at Cota Capital. On a regular basis I encounter interesting scientific research, startups tackling important and difficult problems, and technologies that wow me. I am curious and passionate about machine learning, advanced computing, distributed systems, and dev/data/ml-ops. In this newsletter, I aim to share what I see, what it means, and why it’s important. I hope you enjoy my ramblings!

Is there a founder I should meet?

Send me a note at albert@cotacapital.com

Want to connect?

Find me on LinkedIn, AngelList, Twitter

Hi everyone! I was under the weather 🤧 last week, but (thankfully) I am feeling better…

What will drive the evolution of enterprise data infrastructure?

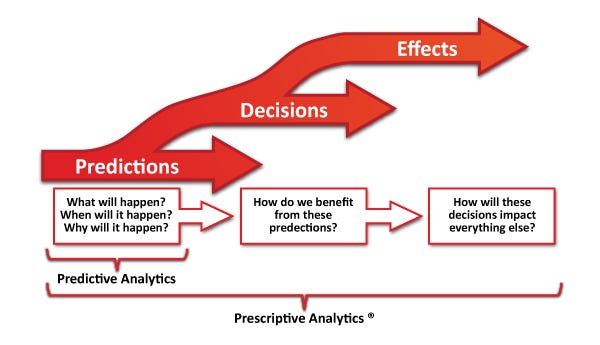

One potential answer begins with the reframing of a canonical question: “what will happen?” —> “what should we do?” The former is predictive, the latter is prescriptive. The difference is seemingly subtle, but the gap between the two is quite vast.

Prescriptive systems take advantage of upstream predictions (forecasts and extrapolations), and use machine learning and other methods to provide adaptive, automated, constrained, time-dependent, and optimal decisions [source]. These systems compare alternatives and innately handle decision-making uncertainty. They provide data provenance (i.e. reproducibility) and the ability to explore what-if scenarios (counterfactuals), quantify risk, and ascertain confidence levels. The outputs of prescriptive systems have non-linear effects when applied in the context of other decisions, within a sequence of decisions (as in reinforcement learning), or within a network of interrelated decisions or agents.
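To make the predictive/prescriptive gap concrete, here is a minimal sketch in Python. It is my own toy example, not taken from any of the sources above: the demand distribution, price, cost, and capacity are all made-up assumptions, and the function names are hypothetical. An upstream forecast is represented as sampled demand scenarios, and the prescriptive step picks the order quantity with the highest expected profit under a capacity constraint.

```python
import random
from statistics import mean


def expected_profit(order_qty, demand_samples, price, cost):
    """Average profit of ordering `order_qty` units across sampled demand scenarios."""
    return mean(price * min(order_qty, d) - cost * order_qty for d in demand_samples)


def prescribe_order(demand_samples, price, cost, max_capacity):
    """Return the feasible order quantity (0..max_capacity) with the highest expected profit."""
    candidates = range(0, max_capacity + 1)
    return max(candidates, key=lambda q: expected_profit(q, demand_samples, price, cost))


# Hypothetical upstream prediction: demand scenarios drawn from Normal(100, 20).
rng = random.Random(0)
demand_samples = [max(0, round(rng.gauss(100, 20))) for _ in range(2000)]

# Predictive answer: "what will happen?"
point_forecast = round(mean(demand_samples))

# Prescriptive answer: "what should we do?" (assumed price, cost, and capacity)
decision = prescribe_order(demand_samples, price=10.0, cost=8.0, max_capacity=150)

# What-if comparison: ordering the forecast versus ordering the prescribed quantity.
profit_if_forecast = expected_profit(point_forecast, demand_samples, 10.0, 8.0)
profit_if_decision = expected_profit(decision, demand_samples, 10.0, 8.0)

print(point_forecast, decision, round(profit_if_forecast, 2), round(profit_if_decision, 2))
```

With a thin margin like the one assumed here, the prescribed order lands below the forecast, because over-ordering costs more than under-ordering. The forecast alone does not tell you that; the decision depends on the uncertainty around the forecast, the economics, and the constraint.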

To prescribe is not trivial.

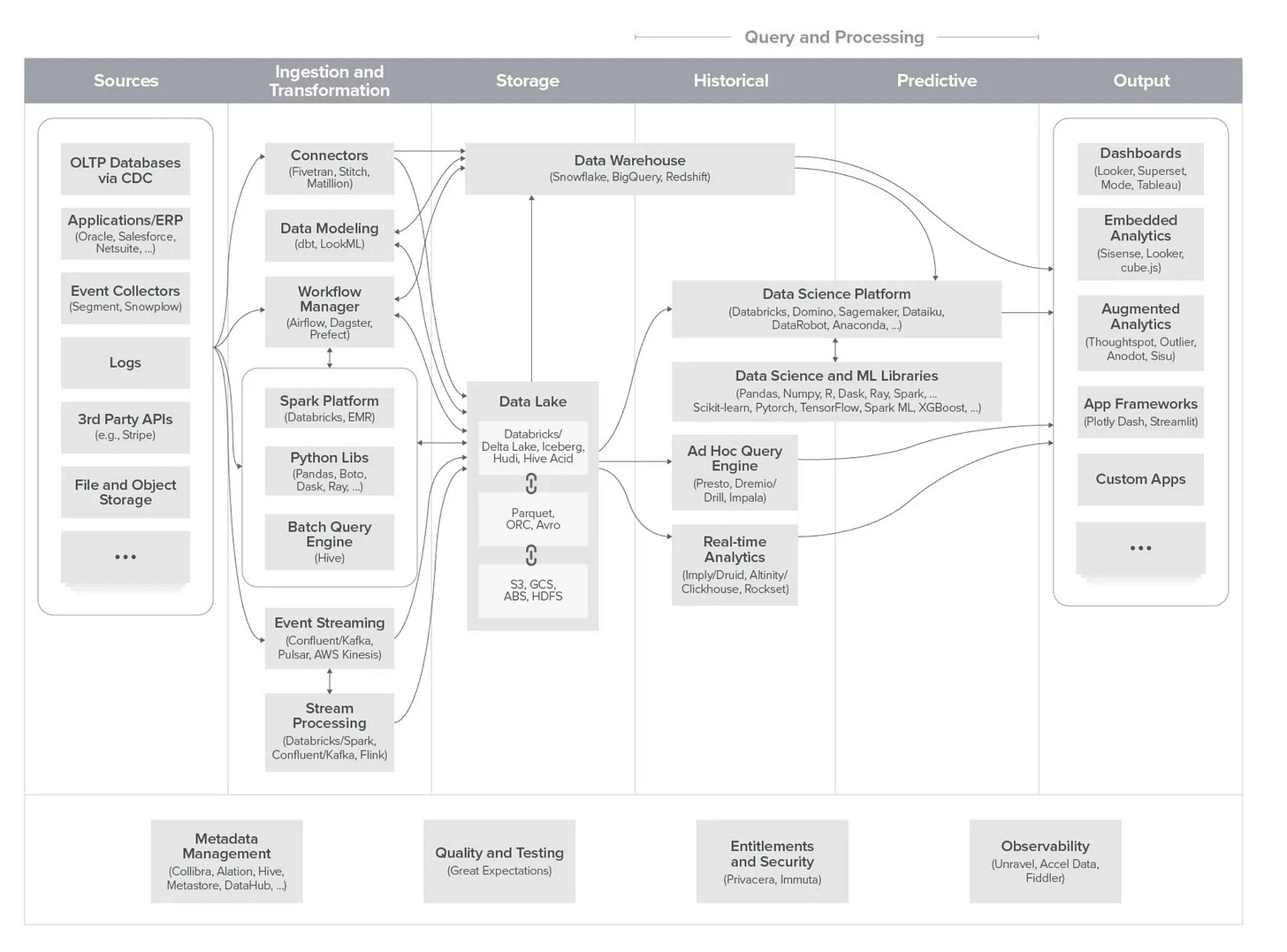

Today, modern enterprise data infrastructure is quickly converging with predictive machine learning infrastructure. In the reference architecture below (borrowed from a16z), the ingestion and transformation stages directly include distributed data frameworks for ML (e.g. Dask, Ray), the query and processing stages leverage a variety of data science platforms and ML libraries to train models and run inference, and the output stage includes frameworks for augmented/embedded analytics and application-level data science tools. Modern data lakes in the storage layer defer transformation (i.e. ELT rather than ETL), allowing for the collection of both structured and unstructured data and favoring metadata management over upfront schema definitions, which creates a fertile set of data for machine learning experimentation. And data lineage/provenance and observability are adapting to address ML-centric use cases.

How will enterprise data infrastructure allow for prescriptive analytics?

Let’s first take a step back and review our progress to this point…

Data processing infrastructure and architecture are influenced by, and co-evolve with, the characteristics of the data created from the world: spatial (volume), temporal (velocity), heterogeneous (variety), and evolutionary (variability). The narrative unfolded as follows:

In the early days, as the data collected grew, relational databases were split across business processes and became fragmented, creating data silos within the enterprise.

Data warehouses (DWs) were born to bring disparate relational databases under one umbrella. Early on-premise DW systems like Teradata and Vertica were expensive, proprietary, built for structured data, and optimized for read access. Eventually DWs became cloud-based. ETL was born to bridge OLTP and OLAP.

With the growth of internet scale datasets (Big Data) came the emergence of distributed analytics (MapReduce) that could run large scale analytics on commodity hardware. These frameworks allowed for the processing of unstructured data, making companies want to collect even more data.

Data lakes emerged as a modern solution, in part because ETL jobs were complex and brittle. Apache Spark then arrived for generalized distributed computation, followed by simpler Snowflake-like systems for ad hoc querying.

In parallel, streaming analytics and time series databases emerged to handle real-time streaming data, and are now an integral part of data infrastructure stacks and reference architectures (e.g. Lambda, Kappa).

If prescriptive analytics is to take hold in enterprise data infrastructure, the following will likely need to happen:

Continued convergence of analytics and ML infrastructure, because prescriptive analytics will require the two to be more tightly coupled so that humans can explore data, understand predictions, and make judgements.

New constructs will emerge at the presentation/interaction layer around explainability, confidence, uncertainty, risk, counterfactuals, etc. This will require advanced data provenance, observability, lineage, quality, etc.

Adaptive control and reinforcement learning will take a more important role in enterprise machine learning, as sequences of decisions need to be assessed for their long-term utility.

Other methods outside of statistical/machine learning may be utilized (e.g. optimization, logic and graph-based models, and simulation). See image below.

Advancements in human-machine interaction (HMI) will be required as decisions need to take into account human factors like judgement, cognitive bias, explainability, exploration, etc.

More machine-generated data from IoT and the continued decentralization of the cloud will also provide opportunities for closed-loop, autonomous decisions near the physical or digital source of data. Of course, lots of infrastructure will be required to make this happen.
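The point above about assessing sequences of decisions for their long-term utility can be sketched with a tiny example. This is a toy of my own making, assuming a hypothetical three-state machine (good, worn, broken) with made-up rewards and deterministic transitions. It uses plain value iteration, a standard dynamic programming method, rather than a full reinforcement learning setup, and compares a myopic policy (best immediate reward) with one that maximizes discounted long-term reward.

```python
GAMMA = 0.9  # assumed discount factor for future rewards

STATES = ("good", "worn", "broken")
ACTIONS = ("run", "maintain")

# (state, action) -> (immediate reward, next state). All numbers are illustrative.
MODEL = {
    ("good", "run"): (10.0, "worn"),
    ("good", "maintain"): (-2.0, "good"),
    ("worn", "run"): (10.0, "broken"),
    ("worn", "maintain"): (2.0, "good"),
    ("broken", "run"): (0.0, "broken"),
    ("broken", "maintain"): (-30.0, "good"),
}


def q_value(state, action, values):
    """Immediate reward plus the discounted value of the state we land in."""
    reward, next_state = MODEL[(state, action)]
    return reward + GAMMA * values[next_state]


def value_iteration(sweeps=500):
    """Repeatedly apply the Bellman optimality update until values settle."""
    values = {s: 0.0 for s in STATES}
    for _ in range(sweeps):
        values = {s: max(q_value(s, a, values) for a in ACTIONS) for s in STATES}
    return values


values = value_iteration()

# Policy that accounts for long-term utility.
long_term_policy = {s: max(ACTIONS, key=lambda a: q_value(s, a, values)) for s in STATES}

# Policy that only looks at the immediate reward of each action.
myopic_policy = {s: max(ACTIONS, key=lambda a: MODEL[(s, a)][0]) for s in STATES}

print("myopic:   ", myopic_policy)
print("long-term:", long_term_policy)
```

Under these assumed numbers, the myopic policy always runs the machine, while the long-term policy maintains it once it is worn or broken. Each step looks worse in isolation, but the sequence is worth more.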

The end goal of all this effort is not just to predict our world, but to alter it.

Take care everyone! 🤪

Disclosures

While the author of this publication is a Partner with Cota Capital Management, LLC (“Cota Capital”), the views expressed are those of the author alone, and do not necessarily reflect the views of Cota Capital or any of its affiliates. Certain information presented herein has been provided by, or obtained from, third party sources. The author strives to be accurate, but neither the author nor Cota Capital guarantees the accuracy or completeness of any information.

You should not construe any of the information in this publication as investment advice. Cota Capital and the author are not acting as investment advisers or otherwise making any recommendation to invest in any security. Under no circumstances should this publication be construed as an offer soliciting the purchase or sale of any security or interest in any pooled investment vehicle managed by Cota Capital. This publication is not directed to any investors or potential investors, and does not constitute an offer to sell — or a solicitation of an offer to buy — any securities, and may not be used or relied upon in evaluating the merits of any investment.

The publication may include forward-looking information or predictions about future events, such as technological trends. Such statements are not guarantees of future results and are subject to certain risks, uncertainties and assumptions that are difficult to predict. The information herein will become stale over time. Cota Capital and the author are not obligated to revise or update any statements herein for any reason or to notify you of any such change, revision or update.